Robotics is a well-established field with many successful deployments of robots for specialized tasks in controlled settings. For example, robots have been used heavily in warehouse operations and manufacturing for decades. When the environment is controlled, today's robot deployments work well. However, even the best current robots fail to adapt to changing environments, which makes deployment in realistic, unstructured settings impractical. In other words, roboticists have not yet been able to create general-purpose robots that exhibit intelligent behavior and adapt to their environments. This has severely restricted the adoption of consumer robots. However, the landscape of robotics has been changing drastically since Foundation Models became mainstream.

What are Foundation Models?

If we understand the complete picture of how we arrived at Foundation Models, we will be in a position to appreciate the trajectory of research and engineering efforts that got us here. Let's start by examining three ways of "programming" computers to bake "intelligence" into them.

People find the best program for each task

Computer programming dramatically changed the kinds of feats humanity could achieve. An early taste of software engineering comes from the Apollo 11 mission, which put man on the Moon! A whole team of researchers and engineers worked on the software stack of the Apollo mission, and Margaret Hamilton, a mathematician, took a pioneering role in leading the team that delivered the software that successfully put man on the Moon [2]. Specifically, the software ran on the guidance computer, which handled the complex maneuvers that required intricate calculations and precise timing. The Saturn V rocket blasted off for the Moon with three astronauts on July 16, 1969, from the Kennedy Space Center.

Image: NASA, Public Domain, via Commons.

When the Apollo Lunar Module approached the surface of the Moon, the on-board computer became overloaded and sent alarms to the crew.

Eagle (Lunar Module) in lunar orbit, photographed from Columbia. Image: NASA.

Because the computer was overloaded, it started shedding less important tasks while continuing to process calculations for the critical ones. This was made possible by a key piece of software on the Lunar Module. It exemplifies the critical thinking and extensive software testing necessary to succeed in such high-stakes situations. Margaret Hamilton was awarded the Presidential Medal of Freedom by President Barack Obama in 2016 for her contributions to the American space program.

"There was no second chance. We all knew that" -- Margaret Hamilton

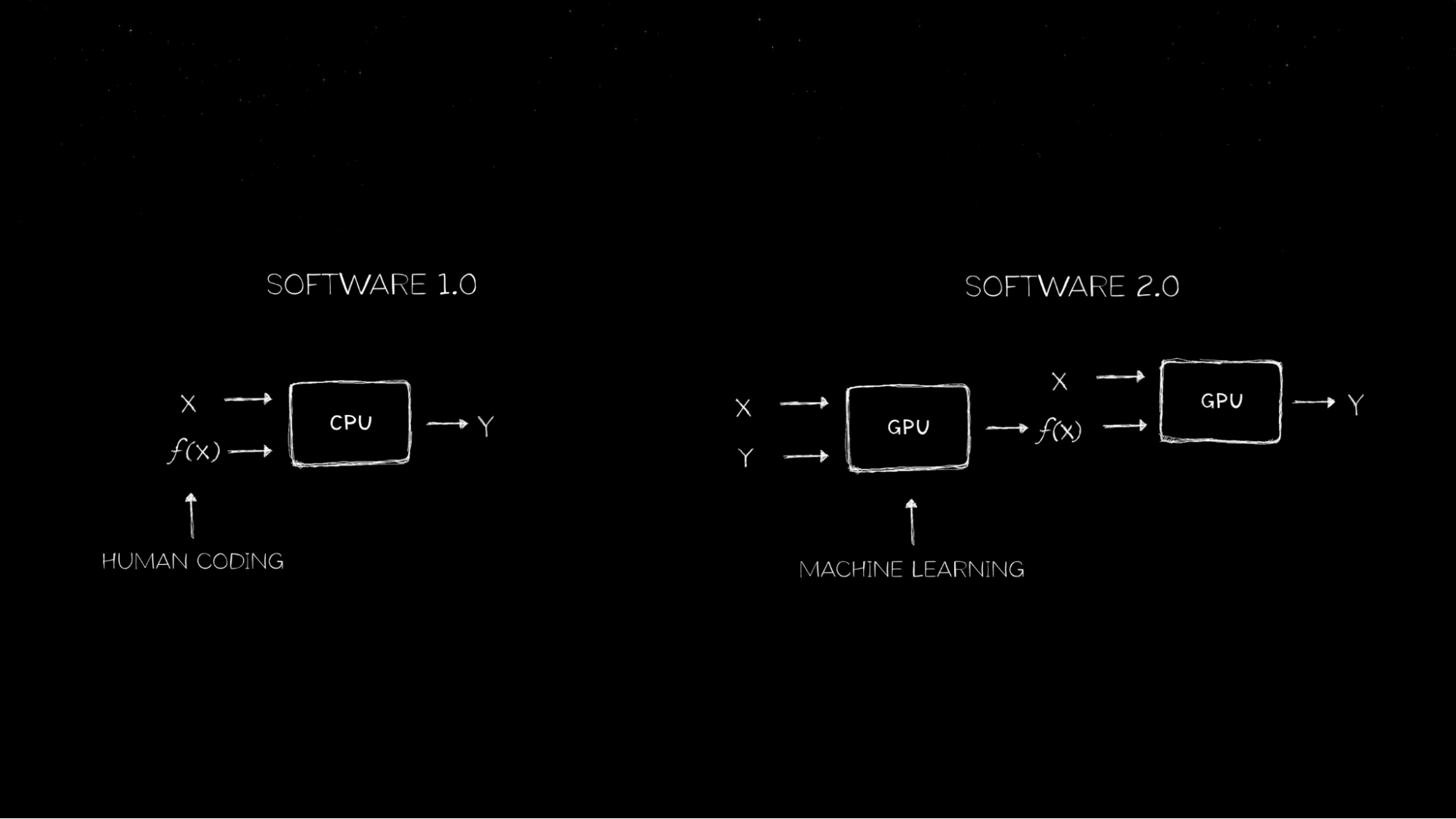

All of this is the realm of Software 1.0 [1], where computers are explicitly programmed. As you can see, a lot of humanity's achievements were, and still are, made possible by great software engineering.

Computers find the best program for each task

While writing explicit programs (software) remains, and will remain, a significant part of building reliable systems, a new paradigm emerged to solve problems that cannot be solved with explicit programming. For example, can you write a program to open a gate when someone comes close to it? Yes: you need a sensor to detect whether someone is close to the gate, and once you "see" someone close to it, you can switch on a motor that opens the gate. This is an "intelligent" behavior (or not, if you opened the gate for a pirate :D ) that you could explicitly program. Now, you are asked to open the gate only for cars and not for trucks. How would you program this new "intelligent" behavior into your system? Can you write a program that can tell cars from trucks? This is exactly the problem that the field of Machine Learning evolved to solve, as shown in the image below!
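Before moving on to Machine Learning, the first, explicitly programmed version of the gate can be sketched in a few lines of Python. This is only an illustration: the function name `should_open_gate`, the 2-meter threshold, and the sensor readings are all made-up assumptions, not part of any real gate controller.

```python
def should_open_gate(distance_m: float, threshold_m: float = 2.0) -> bool:
    """Explicit rule written by a person: open when something is close enough."""
    return distance_m <= threshold_m


# Simulated proximity-sensor readings in meters (hypothetical values).
readings = [5.0, 3.1, 1.8, 0.9, 4.2]

# The rule reacts to distance only; it cannot tell a car from a truck.
decisions = [should_open_gate(d) for d in readings]
print(decisions)  # [False, False, True, True, False]
```

Notice that nothing in this rule knows what kind of object is in front of the gate, which is why the "cars only" requirement cannot be met by simply adjusting the threshold.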

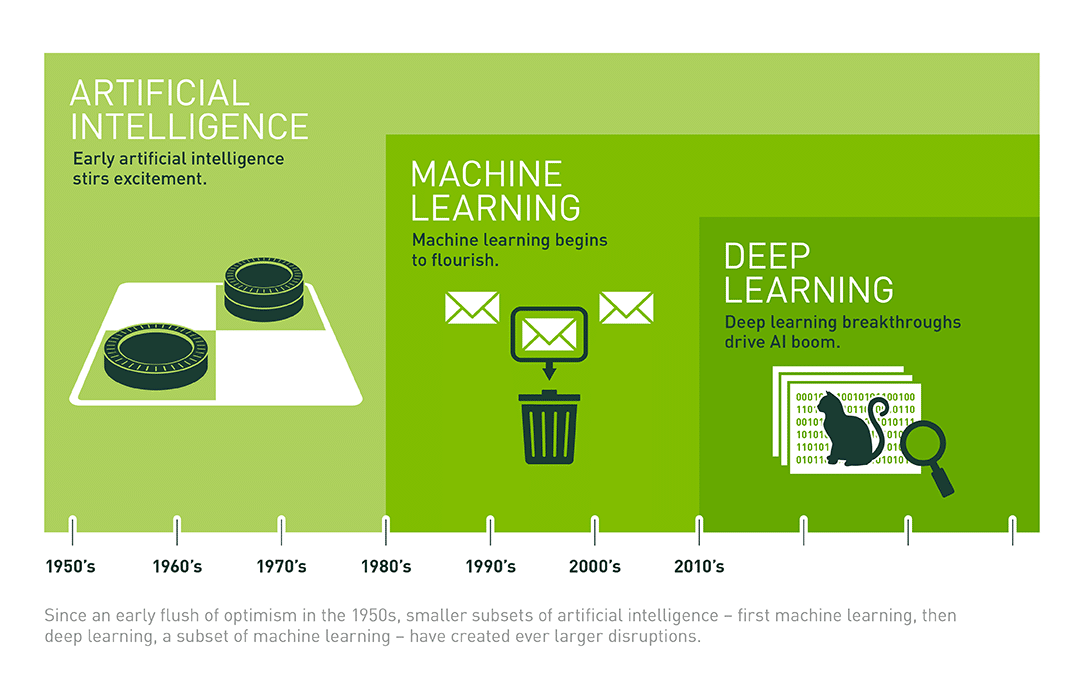

Image Source: For more information, see NVIDIA's blog post

This is a really nice depiction that emphasizes learning a function f(x) to perform a task. Think of a function as something that takes inputs and returns an output. For the intelligent gate example above, you programmed this function explicitly. But explicit programming falls short for the new demand of opening the gate only for cars and not for trucks. Imagine how you could solve this problem. You probably need to learn the function f(x) from various examples of cars and trucks, as shown under Machine Learning in the Software 2.0 part of the image. This paradigm of programming computers still needs data for each task. For example, if you are now asked to open the gate only for people and not for any vehicles, you will have to start from scratch. That is, you will first need to collect images of people and vehicles under various lighting conditions, angles, and other variations. Then you find the new f(x) from this data using the inputs (X) and the labels (Y). Note that when we collect data, we know whether each input (X) is a person or a car, and that is the label (Y) for the data point.
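To make "finding f(x) from inputs X and labels Y" concrete, here is a minimal sketch in Python. It assumes, purely for illustration, that each vehicle is described by two measured numbers (length and height in meters) instead of an image, and it uses a very simple learning method (nearest centroid) in place of the deep learning models used in practice. The data values and the names `fit` and `predict` are hypothetical.

```python
from math import dist
from statistics import mean

# Hypothetical training data: X = (length_m, height_m), Y = label.
X = [(4.2, 1.4), (4.5, 1.5), (4.0, 1.6), (8.5, 3.2), (12.0, 3.8), (9.5, 3.5)]
Y = ["car", "car", "car", "truck", "truck", "truck"]


def fit(X, Y):
    """Learn one centroid (average feature vector) per label from the examples."""
    centroids = {}
    for label in set(Y):
        points = [x for x, y in zip(X, Y) if y == label]
        centroids[label] = tuple(mean(col) for col in zip(*points))
    return centroids


def predict(centroids, x):
    """f(x): return the label whose learned centroid is closest to x."""
    return min(centroids, key=lambda label: dist(centroids[label], x))


f = fit(X, Y)  # the "program" is found from data, not written by hand
print(predict(f, (4.3, 1.5)))   # car
print(predict(f, (10.0, 3.4)))  # truck
```

The gate would then open only when `predict(f, x) == "car"`. Switching the task to people versus vehicles means collecting a new X and Y and calling `fit` again, which is the per-task data cost described above.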

Image Source: For more information, see NVIDIA's blog post on Deep Learning

Baking intelligence into computers has fascinated researchers and engineers since the inception of computing. The image above depicts the timeline of Artificial Intelligence and the emergence of Machine Learning and the specialized field of Deep Learning over the last few decades. After AlexNet won the ImageNet challenge in 2012, Deep Learning went on to demonstrate success in domains such as computer vision, natural language processing, and reinforcement learning.

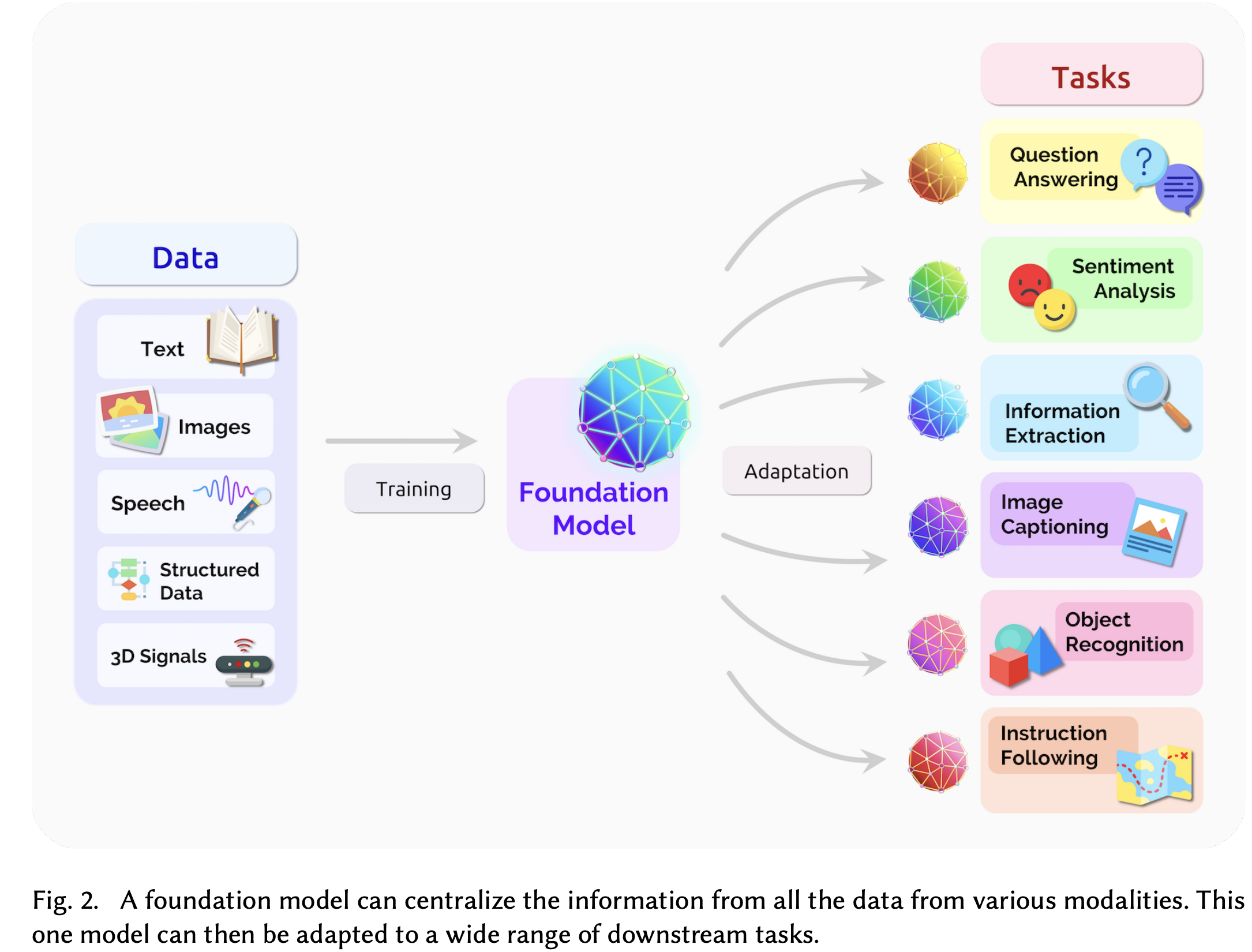

Computers find a program that can be adapted to multiple tasks

Traditionally, machine learning requires data collection for the specific problem being solved, followed by model training and finally deployment. This significantly increases the effort needed to create machine learning solutions for new problems. Even under this paradigm, machine learning, and deep learning in particular, is used extensively in real-world deployments spanning multiple domains. But what if you could train a machine learning model once and deploy it many times? This is exactly the promise of foundation models. Foundation models are trained on massive web-scale data, and the resulting model can be used without re-training (zero-shot) or with minor finetuning (which still requires far less data than training a model from scratch).
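The "train once, adapt many times" idea can be sketched with a toy example in Python. Everything here is an assumption made for illustration: `embed` is a hand-written placeholder standing in for a frozen, pretrained foundation model (a real one would learn its features from web-scale data), the inputs are three made-up measurements rather than images, and `adapt` fits only a tiny task-specific piece from one example per class. The sketch illustrates adaptation with very little data; it does not show zero-shot use.

```python
from math import dist
from statistics import mean


def embed(raw):
    """Placeholder for a frozen pretrained model: raw input -> feature vector."""
    length_m, height_m, wheels = raw
    return (length_m / 10.0, height_m / 4.0, wheels / 10.0)


def adapt(examples):
    """Fit a small task head: one centroid per label, computed in feature space."""
    by_label = {}
    for raw, label in examples:
        by_label.setdefault(label, []).append(embed(raw))
    return {
        label: tuple(mean(col) for col in zip(*feats))
        for label, feats in by_label.items()
    }


def predict(head, raw):
    """Classify by the nearest centroid, reusing the same frozen embed()."""
    return min(head, key=lambda label: dist(head[label], embed(raw)))


# Two different tasks share one embed(); each needs only a few labeled examples.
car_vs_truck = adapt([((4.2, 1.5, 4), "car"), ((10.0, 3.5, 10), "truck")])
person_vs_vehicle = adapt([((0.5, 1.7, 0), "person"), ((4.5, 1.5, 4), "vehicle")])

print(predict(car_vs_truck, (9.0, 3.2, 6)))       # truck
print(predict(person_vs_vehicle, (0.4, 1.6, 0)))  # person
```

The expensive part (here, `embed`) is shared and never retrained, while each new task only adds a small head, which mirrors the contrast with the per-task training described above.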

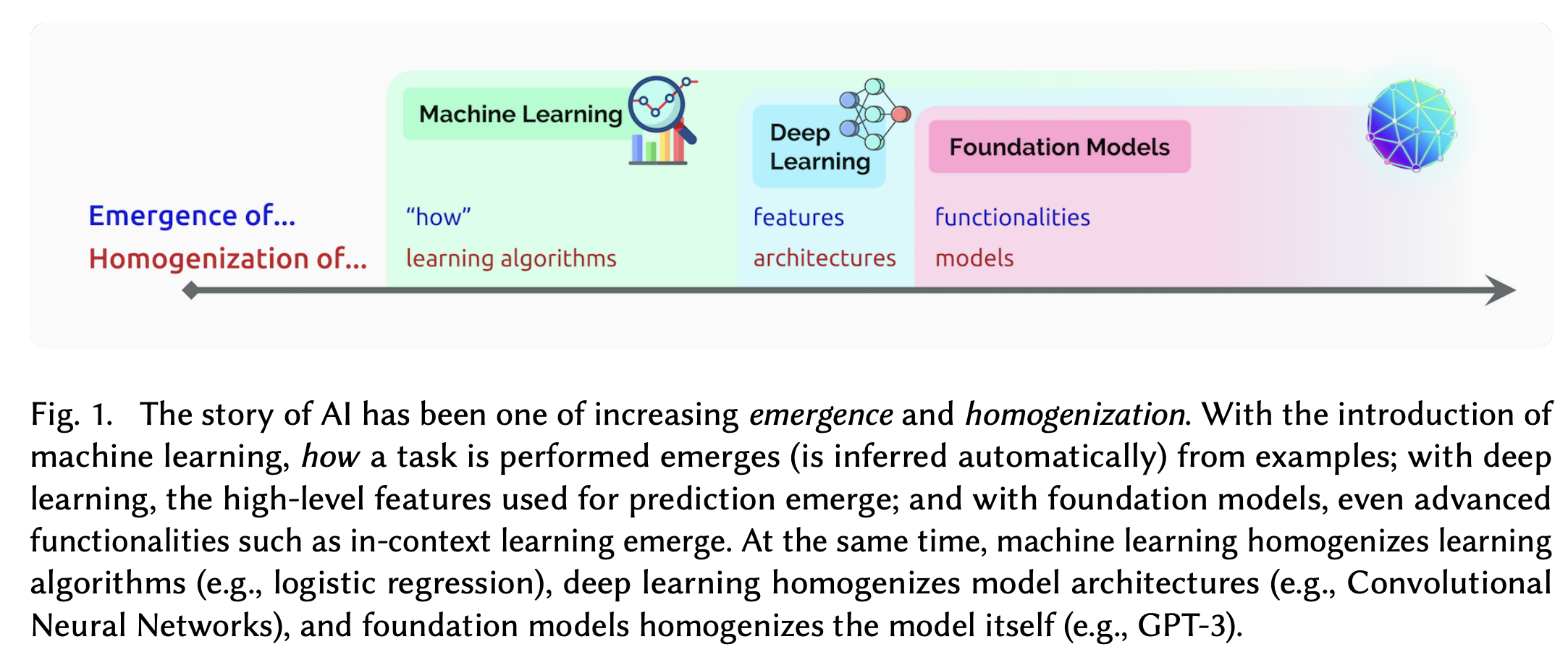

Emergence of Foundation Models.

Image: On the Opportunities and Risks of Foundation Models [3].

Foundation Models trained once with massive compute can be adapted to various tasks with minimal changes.

Image: On the Opportunities and Risks of Foundation Models [3].

Impact of Foundation Models on Robotics

Robotics has seen many successful real-world deployments for decades, and progress has accelerated in the last decade thanks to advances in deep learning, reinforcement learning, and hardware. Robots are heavily used in logistics, manufacturing, agricultural operations, mining, search and rescue, construction, and many other domains where the environment is structured and variations are controlled [4]. Robotics also goes by other names, two of which are Embodied AI and Physical Intelligence. The Embodied AI market is projected to reach $370B by 2040 [4], which points to strong potential for innovation and growth.

References

- [1] https://blogs.nvidia.com/wp-content/uploads/2024/10/software1.02.0.png

- [2] https://airandspace.si.edu/amp-stories/margaret-hamilton/

- [3] Bommasani, Rishi et al. “On the Opportunities and Risks of Foundation Models.” arXiv:2108.07258 (2021).

- [4] Will embodied AI create robotic coworkers? https://www.mckinsey.com/industries/industrials/our-insights/will-embodied-ai-create-robotic-coworkers
